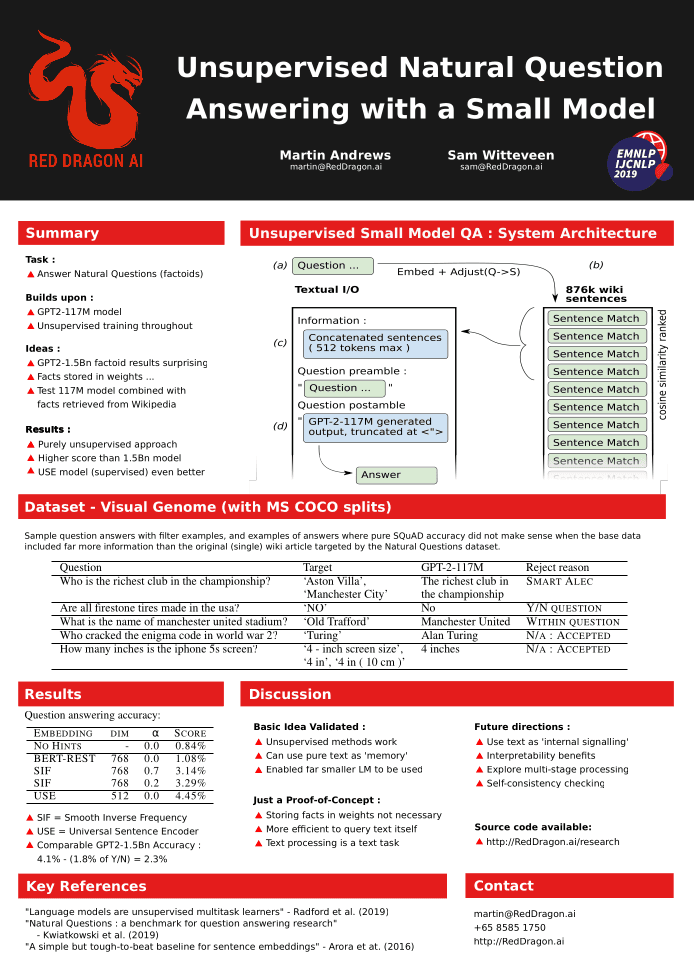

Unsupervised Natural Question Answering with a Small Model

- Authors: Martin Andrews (@mdda123)

This paper was accepted to the FEVER2.0 workshop at EMNLP-IJCNLP-2019 in Hong Kong.

Abstract

The recent (2019-02) demonstration of the power of huge language models such as GPT-2 to memorise the answers to factoid questions raises questions about the extent to which knowledge is being embedded directly within these large models. This short paper describes an architecture through which much smaller models can also answer such questions - by making use of ‘raw’ external knowledge. The contribution of this work is that the methods presented here rely on unsupervised learning techniques, complementing the unsupervised training of the Language Model. The goal of this line of research is to be able to add knowledge explicitly, without extensive training.

Poster Version

Link to Paper

And the BibTeX entry for the arXiv version:

@article{DBLP:journals/corr/abs-1911-08340,
  author     = {Martin Andrews and
                Sam Witteveen},
  title      = {Unsupervised Natural Question Answering with a Small Model},
  journal    = {CoRR},
  volume     = {abs/1911.08340},
  year       = {2019},
  url        = {http://arxiv.org/abs/1911.08340},
  eprinttype = {arXiv},
  eprint     = {1911.08340},
  timestamp  = {Mon, 02 Dec 2019 17:48:37 +0100},
  biburl     = {https://dblp.org/rec/journals/corr/abs-1911-08340.bib},
  bibsource  = {dblp computer science bibliography, https://dblp.org}
}